Featured Resource

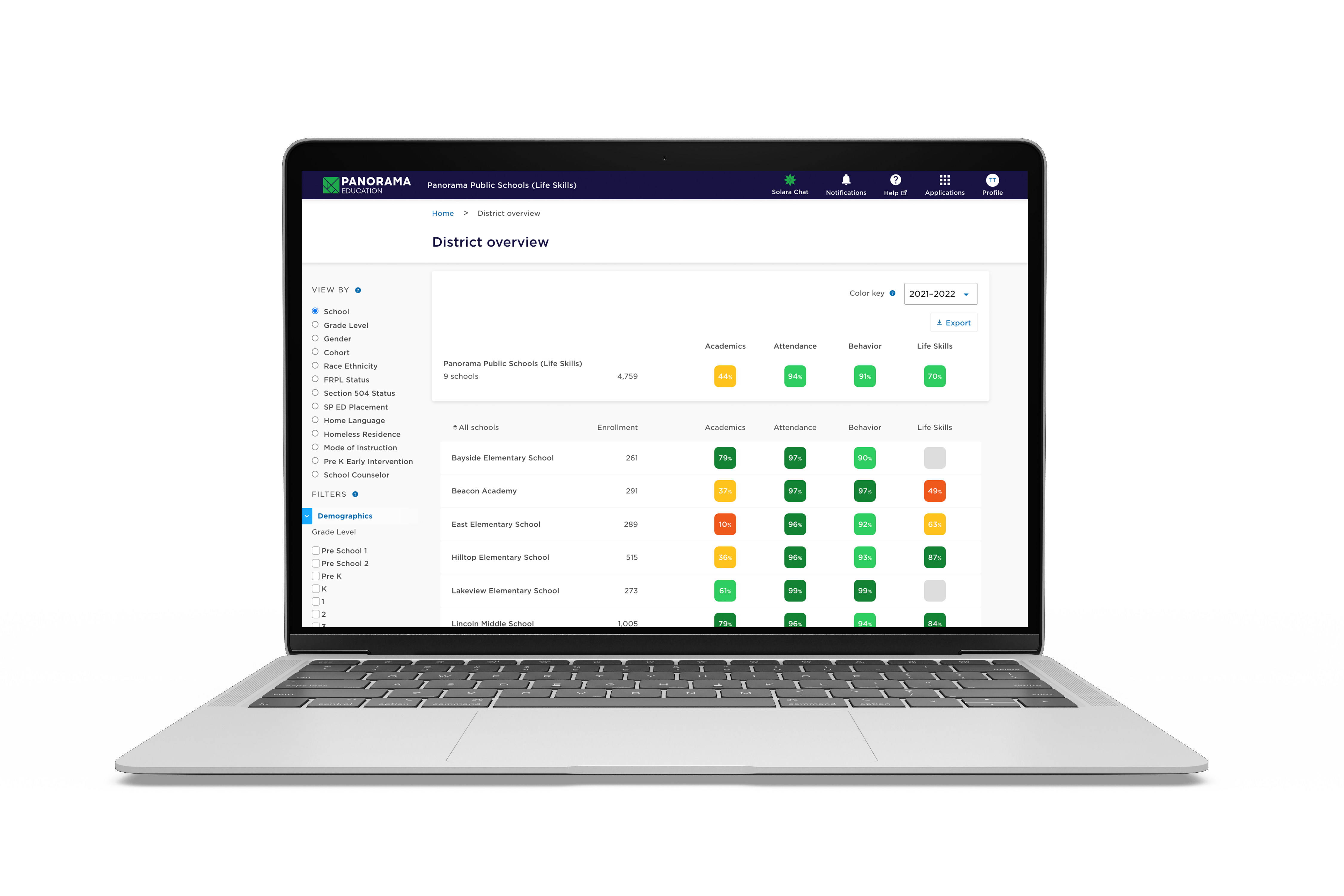

Panorama Student Survey

Elevate student voice on school climate, teaching and learning, relationships, and belonging.

Download Now

POPULAR POSTS

101 Inclusive Get-to-Know-You Questions for Students [+ PDF Download]

Popular

Your District Needs a Family Engagement Survey + 21 Questions To Ask

Family Engagement

Using Panorama Surveys to Empower Students at Highline Public Schools

Success Stories

17 Questions School Leaders Can Ask to Support Teacher Well-Being

Social-Emotional Learning

Get notified about new resources and articles

Join 90,000+ education leaders on our weekly newsletter.